This is the third blog post in a series discussing how high-performing DevOps teams build secure systems at scale.

At the core of DevOps is the notion of sustainable, smooth flows of work. DevOps teams achieve this by creating CI/CD pipelines, orienting teams around very small but frequent code changes, getting end-to-end early, and adopting system architectures (such as those based on microservices) to support all of the above at speed.

Success with DevOps in a secure environment is made more likely if teams adapt their existing DevOps principles to also include security. A high-velocity DevOps team should never have to throw any security practices overboard to ship features faster.

Modeling and Optimizing the Security Workflow

Kanban is a work system that focuses on flow and speed of development. At its core is the visualization of work phases: as items move through different stages of work, seeing those states laid out makes it easier to spot slow phases, identify bottlenecks, and optimize for speed.

An organization faced with chronic release delays may find that the security review phase is taking far too long in their own system. Usually, this is because the security reviews are being done far too late, and with too large a “batch size”. In other words: too many things land on the security team’s plate to review all at once.

Sophisticated organizations solve this problem by “moving security left”. Assuming work flows from left to right in a Kanban visualization, this means getting the security team involved much earlier in the development of new features and releases. This uncovers major security issues early in the development cycle, and that prevents delays later on.
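To make the bottleneck hunt concrete, here is a minimal sketch of how a team might measure the time cards spend in each Kanban stage. The card history, the stage names, and the helper functions are all hypothetical; a real board would export this data in its own format.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean

# Hypothetical card history: (card_id, stage, entered, left).
HISTORY = [
    ("CARD-1", "development", datetime(2024, 1, 1), datetime(2024, 1, 3)),
    ("CARD-1", "qa", datetime(2024, 1, 3), datetime(2024, 1, 4)),
    ("CARD-1", "security_review", datetime(2024, 1, 4), datetime(2024, 1, 12)),
    ("CARD-2", "development", datetime(2024, 1, 2), datetime(2024, 1, 5)),
    ("CARD-2", "qa", datetime(2024, 1, 5), datetime(2024, 1, 6)),
    ("CARD-2", "security_review", datetime(2024, 1, 6), datetime(2024, 1, 16)),
]


def average_days_per_stage(history):
    """Return the mean number of days cards spent in each stage."""
    durations = defaultdict(list)
    for _card, stage, entered, left in history:
        durations[stage].append((left - entered).total_seconds() / 86400)
    return {stage: mean(days) for stage, days in durations.items()}


def find_bottleneck(history):
    """Return the stage with the highest average dwell time."""
    averages = average_days_per_stage(history)
    return max(averages, key=averages.get)


if __name__ == "__main__":
    for stage, days in average_days_per_stage(HISTORY).items():
        print(f"{stage}: {days:.1f} days on average")
    print("Bottleneck:", find_bottleneck(HISTORY))
```

With this made-up data, the security review stage stands out as the slowest phase, which is the signal that would prompt a team to move security left.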

Securing Microservices for Speed

Microservice architectures offer many benefits to security teams, especially teams that focus on their own release velocity.

Let’s take an example of a hypothetical “currency converter” microservice. It’s likely a simple task to have the security team review it and grant it access to the single database it needs. But what if that same feature were buried within a large monolith of millions of lines of code? The security team might have to untangle a lot of changes to see what is important for them to review. This gets even worse if the monolith has undergone many substantive changes between releases. Asking a security team to review a few dozen lines of security policy code is easier than having them consume and check many thousands of lines of code.

Ongoing upgrades to the code within already-enrolled microservices are very fast, as long as new secrets or permissions are not required by the new versions. Decommissioning microservices becomes a simple un-enrollment at the policy level. Decommissioning in a very large monolith is nowhere near as easy, given the amount of coupling involved, or the sheer size of the surface area needing oversight.
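The enrollment lifecycle described above can be sketched in a few lines. This is an illustrative in-memory model only, not the API of any particular secrets manager: `PolicyRegistry`, its methods, and the resource names are assumptions made up for the example.

```python
class PolicyRegistry:
    """Hypothetical in-memory record of which service may use which resources."""

    def __init__(self):
        self._policies = {}

    def enroll(self, service, resources):
        """Record the resources the security team has approved for a service."""
        self._policies[service] = frozenset(resources)

    def is_allowed(self, service, resource):
        """Return True if the service has been granted the resource."""
        return resource in self._policies.get(service, frozenset())

    def needs_review(self, service, requested):
        """Return True if a new version asks for anything not already granted."""
        granted = self._policies.get(service, frozenset())
        return not set(requested) <= granted

    def unenroll(self, service):
        """Decommission a service by dropping its policy entry."""
        self._policies.pop(service, None)


if __name__ == "__main__":
    registry = PolicyRegistry()
    registry.enroll("currency-converter", {"rates-db"})

    # An upgrade that needs only existing permissions skips a new review.
    print(registry.needs_review("currency-converter", {"rates-db"}))

    # An upgrade that needs a new resource goes back to the security team.
    print(registry.needs_review("currency-converter", {"rates-db", "payment-gateway"}))

    # Decommissioning is a single un-enrollment.
    registry.unenroll("currency-converter")
    print(registry.is_allowed("currency-converter", "rates-db"))
```

The point of the sketch is the size of the review surface: the security team reasons about one small set of grants per service rather than the service's full codebase.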

Traditional teams using big-bang releases of enormous monoliths tend to work in a waterfall system. Once an application makes its lumbering departure from QA, they request a security review. The security team then faces the unenviable task of reviewing (in one fell swoop!) the many thousands of pertinent application changes, all while the business is applying pressure to get the application live.

Large teams that ship frequently do so by decomposing their codebases into microservices, allowing the security team to work their approval magic in a focused, fine-grained model that is conducive to speed and visibility of progress.

Going End-to-End, and Doing So Early

DevOps has embraced the concept of “getting end-to-end early”. It is a simple method of de-risking delivery and improving confidence in estimates. The faster the team can get to “connecting the pipes” in a new service, the sooner they’ll learn how to build a smooth deployment flow for making frequent upgrades and updates to it. Feature flags can hide new features that deploy to production but aren’t yet ready for full exposure to the users.
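As a rough illustration of the feature-flag idea, the sketch below ships a new code path to production but keeps it dark until the flag is switched on. The flag name, the `FLAGS` dictionary, and the `checkout` function are hypothetical; real teams usually read flags from configuration or a dedicated flag service.

```python
# Hypothetical flag store; in practice this would come from config or a flag service.
FLAGS = {"new_checkout": False}


def is_enabled(flag, flags=FLAGS):
    """Unknown flags default to off, so unfinished features stay hidden."""
    return flags.get(flag, False)


def checkout(cart_total, flags=FLAGS):
    """Route to the new code path only when its flag is on."""
    if is_enabled("new_checkout", flags):
        return f"new checkout: {cart_total:.2f}"
    return f"legacy checkout: {cart_total:.2f}"


if __name__ == "__main__":
    print(checkout(10))                          # flag off: legacy path
    print(checkout(10, {"new_checkout": True}))  # flag on: new path
```

Both paths are deployed together, so the pipeline is exercised end to end on every change while users continue to see the existing behavior.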

Getting end-to-end early also works well to de-risk the security changes required for services, especially when the team has embraced the Security Policy as Code principle.

For example, if a new service needs access to an internal CRM database and payment gateway, the team would build a CI/CD pipeline for this new service as their first task, and get a v0.1 of it moving through that pipeline with only basic connectivity established.

The service would have its privileges already vetted and would be “pre-enrolled” to access the sensitive resources it needs once deployed in production. This de-risks the deployment of later versions where developers will be able to create the remaining features in the service, safe in the knowledge that the service’s permissions and security settings have already been set up.
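One way to picture that v0.1 is as a service that does nothing except prove it can reach its pre-enrolled resources. The sketch below is an assumption about how such a pipeline step might look: the probe functions are stand-ins supplied by the caller, and the resource names simply mirror the CRM database and payment gateway from the example.

```python
from typing import Callable, Dict


def smoke_test(probes: Dict[str, Callable[[], bool]]) -> Dict[str, bool]:
    """Run each connectivity probe; a probe that raises counts as a failure."""
    results = {}
    for name, probe in probes.items():
        try:
            results[name] = bool(probe())
        except Exception:
            results[name] = False
    return results


def pipeline_gate(results: Dict[str, bool]) -> bool:
    """Let the release proceed only if every resource was reachable."""
    return all(results.values())


if __name__ == "__main__":
    def crm_probe():
        # Stand-in for a real connection attempt to the CRM database.
        return True

    def gateway_probe():
        # Stand-in for a failing connection, e.g. a missing permission.
        raise ConnectionError("access denied")

    results = smoke_test({"crm-db": crm_probe, "payment-gateway": gateway_probe})
    print(results)
    print("release allowed:", pipeline_gate(results))
```

If a permission is missing, the team finds out on the first trivial deployment rather than when the finished feature is waiting to go live.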

Summary

High-performing DevOps teams are able to achieve high velocity and smooth flow by:

- Using a Kanban view of their development cycle to find bottlenecks and optimize them out

- Moving security “left” by engaging security teams early in the development cycle, so that security issues surface early

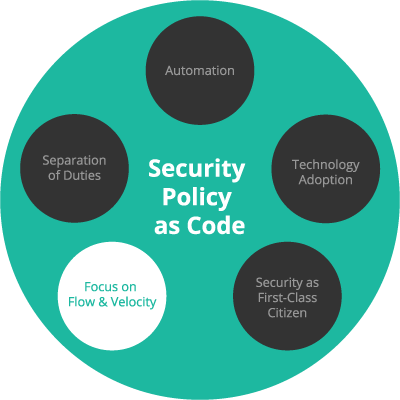

- Using a Security Policy as Code approach to have the Dev, Sec and Ops teams communicate efficiently and unambiguously

- Decomposing large applications into smaller microservices, each with their own security policy

- Reducing the “batch size” of changes as much as possible, enabling the security team to work on a smooth, predictable flow of small items

- Getting end-to-end early to de-risk the ongoing upgrades of components and modules

These principles work well in large teams of hundreds of developers or more, and they are just as effective in much smaller teams: a secure development flow is worth applying regardless of team size.

In the next post in this series, we’ll learn how all teams within high-performing organizations consider security as a “first-class citizen”, as opposed to it being an afterthought.


Brian Kelly is Head of Conjur Engineering at CyberArk, where he focuses on creating products that add much-needed security and access management to the landscape of DevOps tools and cloud systems. Brian is passionate about building teams, cybersecurity, and DevOps. Find him on Twitter at @brikelly.