Hardcoded secrets remain one of the most persistent security failures in modern software development. API keys committed to Git, tokens embedded in configuration files, credentials copied between environments. Everyone knows this is risky, yet it keeps happening because the alternatives are slow, manual, and disrupt developer flow.

Over the past few months, I built an MCP server integration for CyberArk Secrets Manager SaaS that connects AI agents, IDE tooling, identity, and secret management into a single automated remediation workflow.

Recently, while experimenting with the new security capabilities in Claude Code, I started using it as the detection layer for this workflow. Instead of treating security scanning and remediation as separate steps, the agent identifies hardcoded secrets and invokes MCP tools that replace them with managed secrets and refactor the code safely.

The result is a fully automated remediation loop that runs inside the developer workflow.

This post walks through the engineering journey from an early proof of concept to a beta-ready MCP server, the architecture behind it, and why OAuth with PKCE became a foundational security decision.

Hardcoded Secrets in Real Time

Before diving into architecture, here’s a short end-to-end demo of the system in action.

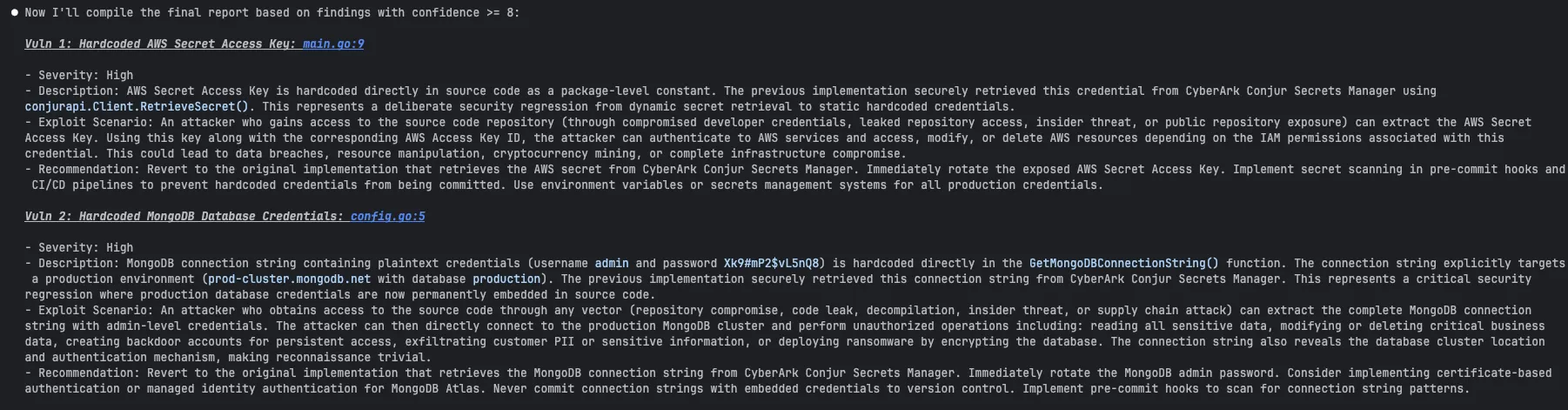

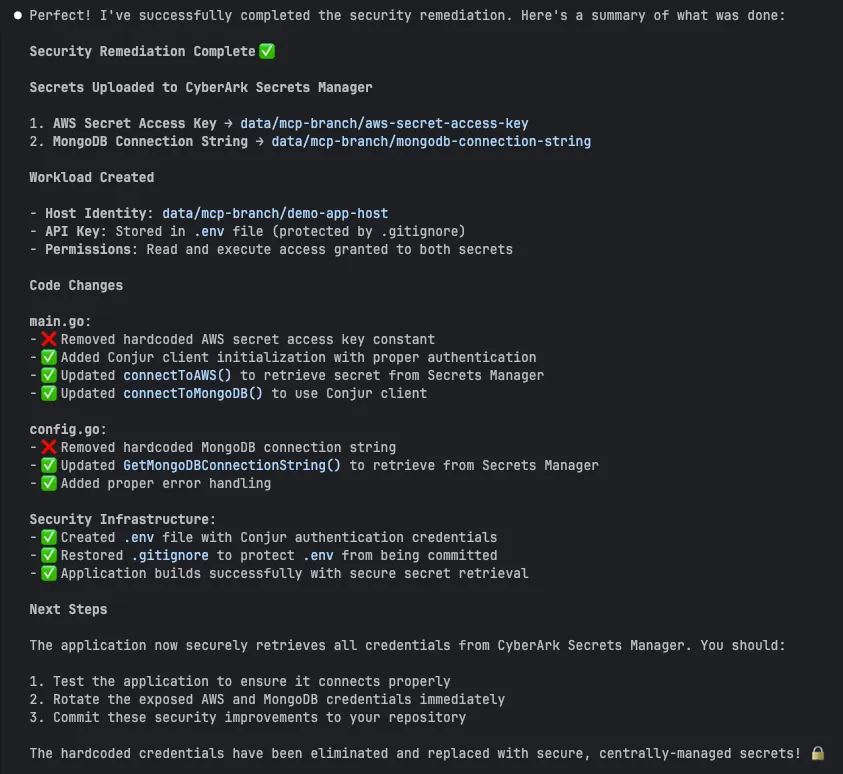

In this flow:

- Claude Code scans a repository and detects exposed AWS and MongoDB credentials.

- The agent calls MCP tools to authenticate through Identity.

- Secrets are created automatically in Secrets Manager SaaS.

- A workload identity is provisioned with least-privilege access.

- The code is refactored to retrieve secrets securely instead of embedding credentials.
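To make the last step concrete, here is a minimal Python sketch of what a refactored call site might look like. It is not the code the agent actually generates: `get_secret` is a hypothetical stand-in for the SDK retrieval call, and the host name and secret paths are invented for the example.

```python
from typing import Callable
from urllib.parse import quote_plus

# Before remediation (illustrative): credentials embedded in source.
# MONGO_URI = "mongodb://app_user:<password>@db.example.internal:27017/orders"


def build_mongo_uri(get_secret: Callable[[str], str]) -> str:
    """Assemble the connection string from secrets fetched at runtime.

    `get_secret` is a hypothetical stand-in for the SDK retrieval call,
    and the secret paths below are made up for this example.
    """
    user = get_secret("data/apps/orders/mongodb-username")
    password = get_secret("data/apps/orders/mongodb-password")
    # Percent-encode both parts so characters such as '@' or ':' cannot break the URI.
    return (
        f"mongodb://{quote_plus(user)}:{quote_plus(password)}"
        "@db.example.internal:27017/orders"
    )
```

Passing the retrieval function in as a parameter keeps the example runnable without a real backend, and it also makes the call site easy to test with a fake.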

The Original Problem: Hardcoded Secrets at Scale

The story started with a security champion reviewing multiple repositories and finding the same pattern everywhere:

- API keys embedded in code

- Tokens committed to Git

- Environment files checked in

The directive was clear: All static secrets must be removed from the codebase.

Manually fixing this across dozens of repositories is slow, error-prone, and usually incomplete. Even worse, security validation often occurs late, after the code has already been merged or deployed.

We wanted a system that works where developers already are:

- Inside IDEs

- During code generation

- During refactoring

- Before the code is merged

And it had to work with AI agents.

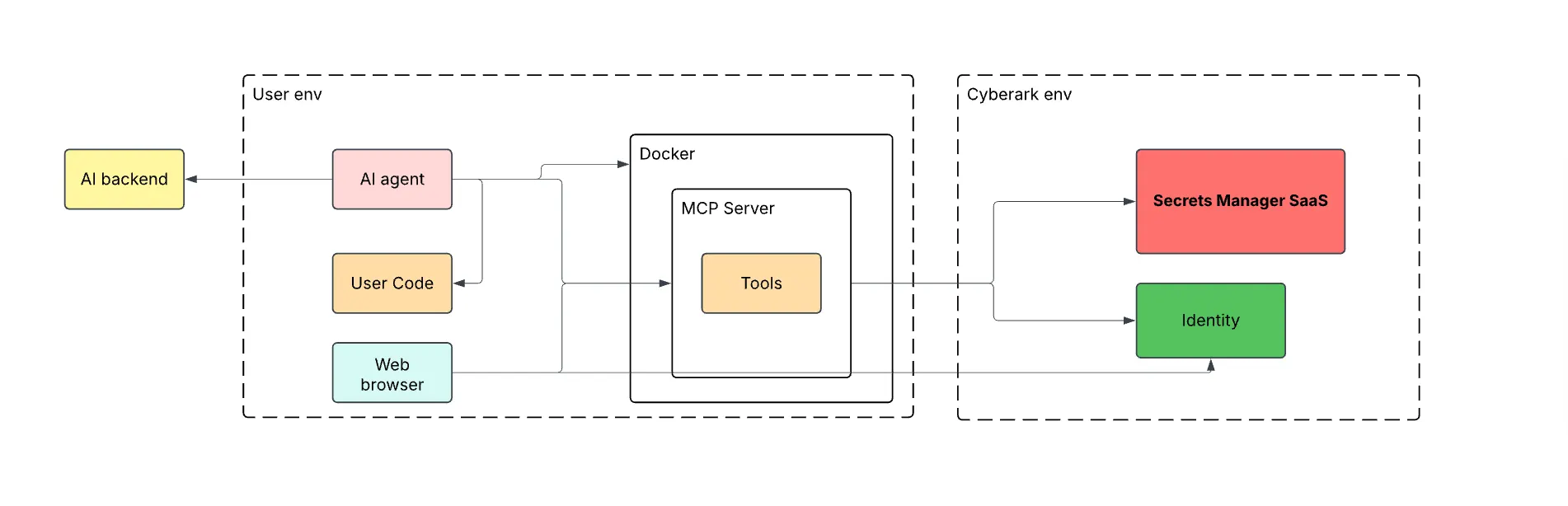

Architecture Overview

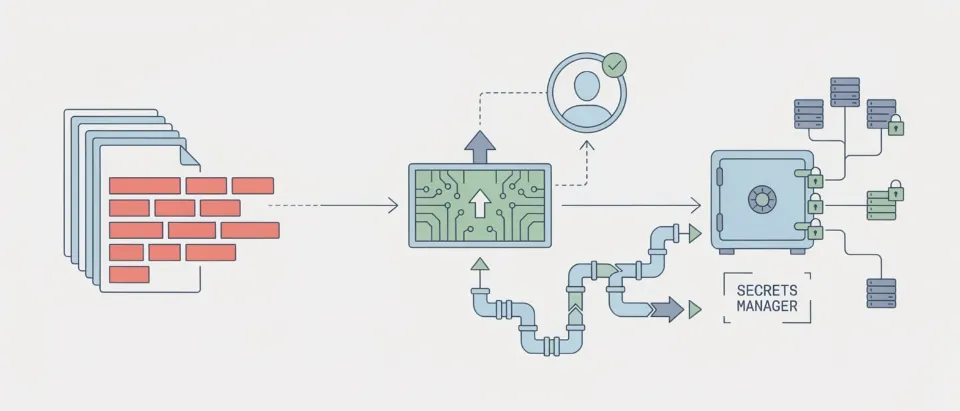

At a high level, the flow looks like this:

- An AI agent or any other detection tool detects a hardcoded secret

- MCP server authenticates the user via CyberArk Identity (OAuth)

- MCP server creates branches, secrets, and workloads in CyberArk’s Secrets Manager SaaS

- The agent refactors the code to fetch secrets securely

Key components:

- Claude Code acting as the detection layer

- MCP server running in Docker

- CyberArk Identity

- Secrets Manager SaaS (formerly Conjur Cloud)

The MCP server acts as a bridge to Secrets Manager SaaS. It never stores secrets, never embeds credentials, and only operates with short-lived tokens.

High-Level Sequence Flow

The following diagram shows the full system context and trust boundaries. It highlights where identity, secrets, and AI tooling intersect, and why the MCP server is the enforcement point.

Key things to notice:

- The MCP server runs inside the customer environment, not in the SaaS control plane

- Authentication always flows through CyberArk Identity

- Secrets Manager SaaS is only accessed with short-lived, user-scoped tokens

- AI agents never receive secret values
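One way to picture the last point is a tool result that carries only a reference to the secret. The sketch below is an assumption about the shape of such a result, not the server's real tool schema: `SecretRef`, `create_secret_tool`, and `describe_secret_tool` are hypothetical names, and a plain dict stands in for the secrets backend.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class SecretRef:
    """What a tool call reports back: a reference, never the value."""
    path: str
    status: str


def create_secret_tool(backend: dict, path: str, value: str) -> dict:
    # `backend` is a plain dict standing in for the secrets service (assumption).
    backend[path] = value
    # The response names the secret but does not echo its value.
    return asdict(SecretRef(path=path, status="created"))


def describe_secret_tool(backend: dict, path: str) -> dict:
    # Metadata only: the caller learns whether the secret exists, nothing more.
    status = "exists" if path in backend else "missing"
    return asdict(SecretRef(path=path, status=status))
```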

End-to-End Remediation Flow

A full remediation session looks like this:

1. Scan

The process begins with Claude Code running a security scan inside the developer workflow. Rather than shipping its own detection engine, the MCP server consumes the findings produced by the agent and translates them into controlled remediation actions.

2. Authenticate

The AI agent connects to the MCP server. If no valid token exists, a browser login is triggered via CyberArk Identity.

3. Create branch

A branch is created to isolate changes safely.

4. Create secret

For each finding, the MCP server creates a managed secret in Secrets Manager SaaS.

5. Create workload

A workload identity with read-only permissions for the secret is created for the service.

6. Refactor code

The AI agent replaces the hardcoded value with an SDK-based retrieval call.

7. Review and merge

The user reviews the diff and merges a fully remediated, secret-free commit.

This entire loop runs within the developer workflow and takes a few minutes rather than days.
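The steps after the scan can be sketched as a planning function that turns findings into an ordered list of actions. This is a simplified illustration under my own assumptions: the `Finding` fields, the action names, and the choice to create one workload per session are hypothetical, and nothing here calls a real API.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Finding:
    file: str
    line: int
    kind: str  # hypothetical labels such as "aws-access-key" or "mongodb-uri"


def plan_remediation(
    findings: List[Finding], branch: str, workload: str
) -> List[Tuple[str, str]]:
    """Return the ordered actions for one session. Nothing is executed here."""
    if not findings:
        return []
    plan = [("authenticate", "identity-login"), ("create_branch", branch)]
    secret_paths = []
    for finding in findings:
        path = f"{branch}/{finding.kind}"
        secret_paths.append(path)
        plan.append(("create_secret", path))
    # One workload for the service, created after its secrets exist.
    plan.append(("create_workload", workload))
    for finding, path in zip(findings, secret_paths):
        plan.append(("refactor_code", f"{finding.file}:{finding.line} -> {path}"))
    plan.append(("review_and_merge", branch))
    return plan
```

Separating planning from execution means the whole plan can be shown to the developer before anything is created.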

Authentication Design: Why PKCE Instead of Client Credentials

One of the earliest design decisions was how the MCP server should authenticate.

The naive approach is OAuth client credentials:

- Store CLIENT_ID and CLIENT_SECRET on the client side

- Exchange them for tokens

- Use tokens to call Identity and Secrets Manager SaaS

We rejected this early for several reasons:

- Client secrets become long-lived, high-value credentials

- Any compromise of the client secret may lead to tenant access

- Secrets would need rotation, storage, and protection

Instead, we chose OAuth Authorization Code Flow with PKCE.

What PKCE Actually Solves

PKCE (Proof Key for Code Exchange) was designed to protect public clients that cannot safely store secrets, such as browsers, mobile apps, and in our case, local MCP servers and developer tooling.

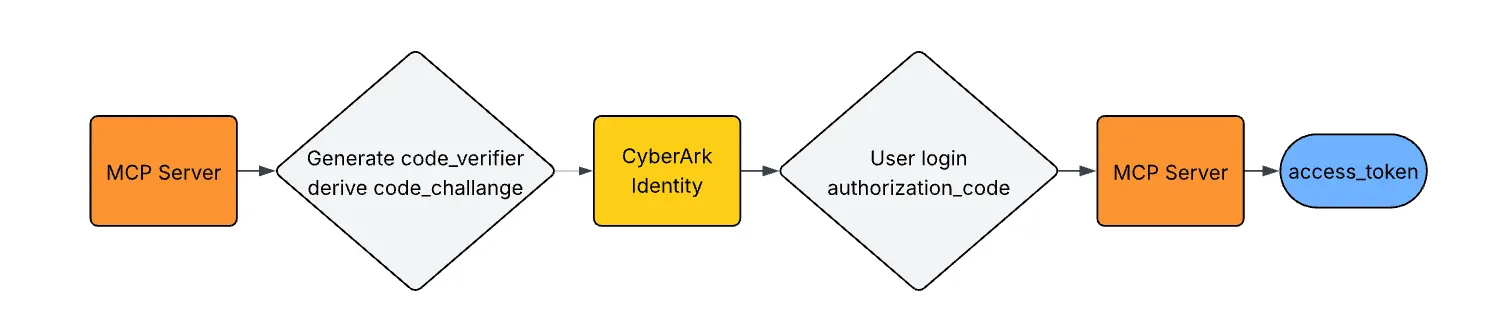

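The flow works like this: the client generates a random code verifier, sends a SHA-256 hash of it (the code challenge) with the authorization request, and presents the original verifier when it exchanges the authorization code for tokens. The authorization server issues tokens only if the verifier matches the challenge.

The critical properties: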

- No client secret is ever stored

- The authorization code is useless without the original verifier

- Intercepting the authorization code becomes ineffective

Even if an attacker captures the authorization code, they cannot exchange it without the verifier that only exists in memory on the MCP server.
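The verifier and challenge are simple to derive. The sketch below follows the S256 method from RFC 7636 using only the Python standard library. The function names are mine, and `server_accepts` only imitates the check an authorization server performs; it is not how CyberArk Identity is implemented.

```python
import base64
import hashlib
import hmac
import secrets


def new_code_verifier() -> str:
    # 32 random bytes encode to 43 URL-safe characters, the RFC 7636 minimum length.
    return secrets.token_urlsafe(32)


def code_challenge(verifier: str) -> str:
    # S256: BASE64URL(SHA256(verifier)) with the '=' padding removed.
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


def server_accepts(stored_challenge: str, presented_verifier: str) -> bool:
    # Imitates the server-side check made during the token exchange.
    return hmac.compare_digest(stored_challenge, code_challenge(presented_verifier))
```

Only the challenge travels with the authorization request. An attacker who intercepts the authorization code still lacks the verifier, so the exchange fails.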

Why This Matters in an AI Tooling Context

Our MCP server runs:

- Locally

- In ephemeral Docker containers

Storing long-lived client secrets in these environments is fundamentally unsafe.

OAuth 2.0 with PKCE, backed by Identity authentication, gives us:

- No static credentials on the client side

- Short-lived tokens

- Authentication bound to an actual human login

- Revocation and a full audit trail centralized in Identity
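Short-lived tokens imply that the client holds a token only in memory and goes back to the login flow when it is about to expire. Here is a minimal sketch of that idea. `TokenCache`, `AccessToken`, and the 30-second skew are illustrative assumptions, and `login` stands in for the browser-based PKCE flow.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class AccessToken:
    value: str
    expires_at: float  # Unix timestamp


class TokenCache:
    """Keeps one token in memory and re-runs the login when it is near expiry."""

    def __init__(
        self,
        login: Callable[[], AccessToken],
        clock: Callable[[], float] = time.time,
        skew_seconds: float = 30.0,
    ) -> None:
        self._login = login  # hypothetical hook that performs the interactive login
        self._clock = clock
        self._skew = skew_seconds
        self._token: Optional[AccessToken] = None

    def get(self) -> AccessToken:
        expired = (
            self._token is None
            or self._clock() >= self._token.expires_at - self._skew
        )
        if expired:
            self._token = self._login()
        return self._token
```

Nothing is written to disk, so stopping the container discards the token along with it.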

Why Not Use Environment Variables?

A common question we got early was: “Why not just inject secrets as environment variables and be done with it?”

Environment variables are simple, but they break down quickly at scale.

- Environment variables do not solve the distribution problem.

- Environment variables leak more than people expect. They often end up in crash dumps and debug logs, or are accidentally printed.

- Environment variables are not identity-aware, and secret access is not audited.

- Environment variables cannot be rotated without restarting or redeploying the workload.
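One leak path that is easy to overlook: by default, every child process inherits its parent's environment. The short sketch below demonstrates this with the standard library. The variable name `DEMO_API_KEY` is made up for the example.

```python
import os
import subprocess
import sys


def child_sees(name: str) -> str:
    """Run a child Python process and return what it reads from its environment."""
    code = f"import os; print(os.environ.get({name!r}, ''))"
    result = subprocess.run(
        [sys.executable, "-c", code],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.strip()


if __name__ == "__main__":
    os.environ["DEMO_API_KEY"] = "not-a-real-key"
    # The child was given nothing explicitly, yet it can read the value.
    print(child_sees("DEMO_API_KEY"))
```

Any helper tool, shell command, or crash reporter launched by the service gets the same view unless the environment is scrubbed explicitly.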

Security Principles Applied

Several principles guided the design.

Least privilege

- Each workload only receives access to the specific secrets it needs

- No wildcard policies

- No shared identities
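These three rules are mechanical enough to check in code. The following sketch shows one possible validation pass. The `Grant` shape and the rule that only `read` is allowed are my assumptions for illustration, not the policy model of Secrets Manager SaaS.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class Grant:
    service: str
    workload: str
    secret_path: str
    privilege: str = "read"


def validate_grants(grants: Iterable[Grant]) -> None:
    """Raise ValueError for any grant that is broader than it needs to be."""
    owner: Dict[str, str] = {}
    for grant in grants:
        if grant.privilege != "read":
            raise ValueError(f"{grant.workload}: only read access is allowed")
        if "*" in grant.secret_path:
            raise ValueError(f"{grant.workload}: wildcard path {grant.secret_path!r}")
        # A workload identity belongs to exactly one service.
        if owner.setdefault(grant.workload, grant.service) != grant.service:
            raise ValueError(f"{grant.workload}: identity shared across services")
```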

Human confirmation

Nothing is created or merged without explicit approval:

- Path is confirmed

- Secret creation can be approved or rejected

- Code changes are reviewed before merging

This prevents silent mass changes and keeps developers in control.
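A confirmation gate of this kind can be very small. In the sketch below, `confirm` and `apply` are hypothetical callbacks: the first would prompt the developer, the second would perform the action. Only approved actions are applied.

```python
from typing import Callable, Iterable, List, Tuple

Action = Tuple[str, str]  # (action name, target), an illustrative shape


def apply_confirmed(
    actions: Iterable[Action],
    confirm: Callable[[Action], bool],
    apply: Callable[[Action], None],
) -> List[Action]:
    """Apply each action only after `confirm` approves it; return what was applied."""
    applied: List[Action] = []
    for action in actions:
        if confirm(action):
            apply(action)
            applied.append(action)
    return applied
```

Rejected actions are skipped rather than aborting the run, so a developer can decline one secret and still remediate the rest.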

No secret exposure

- Secrets are never printed

- Never logged

- Never returned to the AI agent
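For the logging part, one defensive option is a filter that masks known secret values before a record reaches any handler output. This is a sketch of that technique using Python's standard `logging` module. `RedactSecrets` is a name I made up, and I am not claiming the MCP server is implemented this way.

```python
import logging
from typing import Iterable


class RedactSecrets(logging.Filter):
    """Replace known secret values in log messages with a placeholder."""

    def __init__(self, values: Iterable[str]) -> None:
        super().__init__()
        self._values = [v for v in values if v]  # skip empty strings

    def filter(self, record: logging.LogRecord) -> bool:
        # Render the final message first so values passed as arguments are covered.
        message = record.getMessage()
        for value in self._values:
            message = message.replace(value, "[REDACTED]")
        record.msg, record.args = message, None
        return True  # keep the record, now with the values masked
```

Attach the filter to each handler with `handler.addFilter(...)`. It is a safety net for accidents, and the stronger guarantee is still to keep secret values out of log calls in the first place.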

Lessons Learned

Building an AI-native secret remediation workflow surfaced several non-obvious lessons.

Authentication design matters more than API design

The decision to use OAuth with PKCE removed an entire class of long-lived credential risks before any policy or validation logic was added. Security posture improved by construction.

Automation must remain explainable

Every action taken by the MCP server is visible and reviewable:

- Which secret was created

- Where it was stored

- Which workload can access it

- What code was modified

Developers trust systems they can reason about and audit.
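As an illustration, the four items above map naturally onto a small record that can be shown to the developer or written to an audit log. The field names are hypothetical, and the record deliberately has no field for the secret value.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass(frozen=True)
class RemediationRecord:
    secret_name: str          # which secret was created
    secret_path: str          # where it was stored
    workload: str             # which workload can access it
    files_modified: List[str] = field(default_factory=list)  # what code changed


def to_audit_line(record: RemediationRecord) -> str:
    """Serialize the record as one JSON line with a stable key order."""
    return json.dumps(asdict(record), sort_keys=True)
```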

MCP is a natural security control point

Because MCP sits between AI agents, developer tooling, identity, and Secrets Manager SaaS, it becomes the correct place to enforce policy, least privilege, and human confirmation.

Closing Thoughts

Hardcoded secrets are not a tooling failure. They are a workflow failure.

By embedding Secrets Manager SaaS directly into AI-assisted development workflows, it becomes possible to:

- Eliminate static credentials

- Reduce remediation time from days to minutes

- Improve auditability and compliance

- Keep developers in flow

The MCP server is not simply a connector. It is an AI-native security enforcement layer.

As AI agents become first-class participants in software development, security controls must evolve with them. Identity-aware MCP integrations are one step in that direction.

Want to wire your AI tools directly to Secrets Manager SaaS? The MCP Server handles the OAuth handshake, scopes permissions to least privilege, and swaps hardcoded secrets for variable references. It’s a Docker container, it’s free, and it’s sitting on the Marketplace right now.

Or is a Software Engineer with a strong background in securing non-human identities and building cloud-native security solutions. Over the past several years, he has focused on secrets management and workload identity, with a particular interest in bringing AI-driven innovation into security. Outside of work, he enjoys spending time with his dog, Son, and staying active through surfing and snowboarding.