Applications need secrets to connect to other machines and services: API keys, SSH keys, session tokens, database connection strings, and the list goes on. This is especially true in the cloud, where applications are split across different physical and virtual servers, and communication between nodes is vital to nearly every function.

Environment variables have traditionally been viewed as a convenient and portable way of providing secrets to applications across almost all platforms. However, with the continuously evolving threat landscape, we believe that they should no longer be the default method for providing sensitive values to applications and workloads.

Risks

The nature of environment variables is that they’re effectively global. Every child process inherits a copy of its parent’s environment, and on Linux any process running as the same user can usually read another process’s environment through /proc/<pid>/environ. This means that access to them can’t easily be limited to a specific process. If there is a rogue process in the application space, it can dump the environment variables and exfiltrate them.
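To make the rogue-process risk concrete, here is a minimal, Linux-only Python sketch. It starts a stand-in application with a made-up `DEMO_API_KEY` variable and then reads that process’s environment back from `/proc/<pid>/environ`, much as any other process running as the same user could. The variable name, its value and the helper function are hypothetical.

```python
import os
import subprocess
import sys


def read_process_environ(pid: int) -> dict:
    """Return the environment a Linux process was started with.

    /proc/<pid>/environ holds NUL-separated KEY=VALUE entries. The kernel
    normally lets a process read this file when it runs as the same user as
    the target, which is how a rogue process could dump another's secrets.
    """
    with open(f"/proc/{pid}/environ", "rb") as f:
        raw = f.read()
    env = {}
    for entry in raw.split(b"\0"):
        if not entry:
            continue  # skip the empty piece after the trailing NUL
        key, _, value = entry.partition(b"=")
        env[key.decode(errors="replace")] = value.decode(errors="replace")
    return env


if __name__ == "__main__" and sys.platform.startswith("linux"):
    # Stand-in "application" with a made-up secret in its environment.
    victim = subprocess.Popen(
        [sys.executable, "-c", "import time; time.sleep(5)"],
        env={**os.environ, "DEMO_API_KEY": "not-a-real-secret"},
    )
    try:
        # Any same-user process could do this; here the parent reads it back.
        print(read_process_environ(victim.pid)["DEMO_API_KEY"])
    finally:
        victim.terminate()
        victim.wait()
```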

Additionally, many tools dump the environment for debugging and logging purposes. It is considered bad practice to have sensitive information in logs because they’re generally stored unencrypted and are not secured in the same way as highly sensitive information. See the OWASP Logging Cheat Sheet for more information on this topic.
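Because debug output that dumps the whole environment is a common way for secrets to reach logs, one stopgap is to redact suspicious-looking values before logging them. The Python sketch below assumes a hypothetical name-based deny-list (`SENSITIVE_NAME`). Heuristics like this inevitably miss secrets stored under unexpected names, so they reduce the risk rather than remove it.

```python
import logging
import os
import re

# Hypothetical deny-list: variable names that *look* like they hold secrets.
# Any list like this is incomplete; treat it as a safety net, not a control.
SENSITIVE_NAME = re.compile(
    r"SECRET|TOKEN|PASSWORD|PASSWD|API_?KEY|PRIVATE_KEY|CREDENTIAL",
    re.IGNORECASE,
)


def redacted_environ(environ) -> dict:
    """Copy environ, masking the values of variables whose names look sensitive."""
    return {
        name: "***REDACTED***" if SENSITIVE_NAME.search(name) else value
        for name, value in environ.items()
    }


if __name__ == "__main__":
    logging.basicConfig(level=logging.DEBUG)
    log = logging.getLogger("startup")
    # log.debug("env: %s", dict(os.environ)) would write every secret to the log.
    log.debug("env: %s", redacted_environ(os.environ))
```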

In Docker or Kubernetes, it’s trivial for an engineer with access to the running pods or containers to dump the environment as well. For example, in Kubernetes they can run `kubectl exec <pod-name> -- env`, which prints all of the container’s environment variables to the console. This violates the principle of least privilege, which in this context means that developers should not have access to secrets in production environments.

These risks have recently been recognized by major industry groups. For example, the Cloud Native Computing Foundation (CNCF), the organization that oversees the development of Kubernetes and other cloud-native projects, has released the Cloud Native Security Whitepaper, which includes the following language around secrets management:

“… secrets should be injected at runtime within the workloads through non-persistent mechanisms that are immune to leaks via logs, audit, or system dumps (i.e., in-memory shared volumes instead of environment variables).”

Similarly, OWASP has the following text in its Secrets Management Cheat Sheet:

“… environment variables are generally accessible to all processes and may be included in logs or system dumps. Using environment variables is therefore not recommended unless the other methods are not possible” (Disclosure: I co-authored this section.)

Similar text can be found in the OWASP Kubernetes Cheat Sheet.

Application Secrets Best Practices

There are other options for providing sensitive information to applications that offer more security and flexibility than run-of-the-mill global environment variables.

Files

Perhaps the simplest alternative to environment variables is a secrets file. While it may seem foolhardy to put sensitive information on a file system, there are reasons this can be more secure than environment variables. For one, you can use file permissions to restrict access to the file. For example, the Unix permission mode 0600 means that only the user who owns the file can read and write it, and other users (apart from root) can do neither.
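A minimal Python sketch of writing and reading such a file follows. The helper names and the `db-password` file name are hypothetical; the point it illustrates is that the 0600 mode is applied when the file is created, not patched on afterwards.

```python
import os
import stat
import tempfile


def write_secret_file(path: str, secret: str) -> None:
    """Create a new file at path that only its owner can read or write (0600).

    The mode is passed to os.open itself rather than set with chmod afterwards,
    so the file never exists with looser permissions. The process umask can
    only clear bits from 0600, never add group or other access. O_EXCL makes
    the call fail if the file already exists instead of reusing it.
    """
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_EXCL, 0o600)
    with os.fdopen(fd, "w") as f:
        f.write(secret)


def read_secret_file(path: str) -> str:
    """Read a secret back, stripping a trailing newline if one was written."""
    with open(path) as f:
        return f.read().rstrip("\n")


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as secrets_dir:
        path = os.path.join(secrets_dir, "db-password")  # hypothetical file name
        write_secret_file(path, "not-a-real-secret")
        print(oct(stat.S_IMODE(os.stat(path).st_mode)))
        print(read_secret_file(path))
```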

Memory

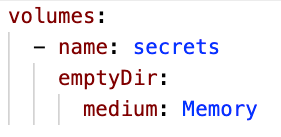

Even better, in ephemeral cloud environments, there are ways of creating what appear to applications to be files but are in fact stored only in memory. These memory-backed “file systems” keep the advantages of file permissions, and their contents are discarded when the workload that uses them goes away. For example, Kubernetes supports an “emptyDir” volume with a medium of “Memory”, which creates a memory-only (tmpfs) volume that exists only as long as its pod does, as you can see in the code sample below.
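An application that expects its secrets on such a volume could check at startup that the directory really is memory-backed. Kubernetes mounts an emptyDir with medium Memory as tmpfs, so this Linux-only Python sketch looks up the filesystem type of the secrets directory in `/proc/self/mounts`. The mount path `/etc/app-secrets` and the refuse-to-start policy are hypothetical choices, not part of Kubernetes or of any CyberArk tool.

```python
import os
from typing import Optional


def filesystem_type(path: str, mounts_text: str) -> Optional[str]:
    """Return the filesystem type of the mount that contains an absolute path.

    mounts_text is in /proc/self/mounts format, one mount per line:
    "device mountpoint fstype options dump pass". The longest matching mount
    point wins; on a tie the later line wins, since later mounts sit on top.
    """
    path = os.path.normpath(path)
    best_point, best_type = "", None
    for line in mounts_text.splitlines():
        fields = line.split()
        if len(fields) < 3:
            continue
        # The kernel escapes spaces in mount points as \040.
        point, fstype = fields[1].replace("\\040", " "), fields[2]
        inside = path == point or path.startswith(point.rstrip("/") + "/")
        if inside and len(point) >= len(best_point):
            best_point, best_type = point, fstype
    return best_type


def is_memory_backed(path: str) -> bool:
    """Linux only: True if path is on tmpfs, as an emptyDir with medium Memory is."""
    with open("/proc/self/mounts") as f:
        return filesystem_type(os.path.realpath(path), f.read()) == "tmpfs"


if __name__ == "__main__":
    secrets_dir = "/etc/app-secrets"  # hypothetical mount path for the volume
    if os.path.isdir(secrets_dir) and not is_memory_backed(secrets_dir):
        raise SystemExit(f"{secrets_dir} is not memory-backed; refusing to start")
```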

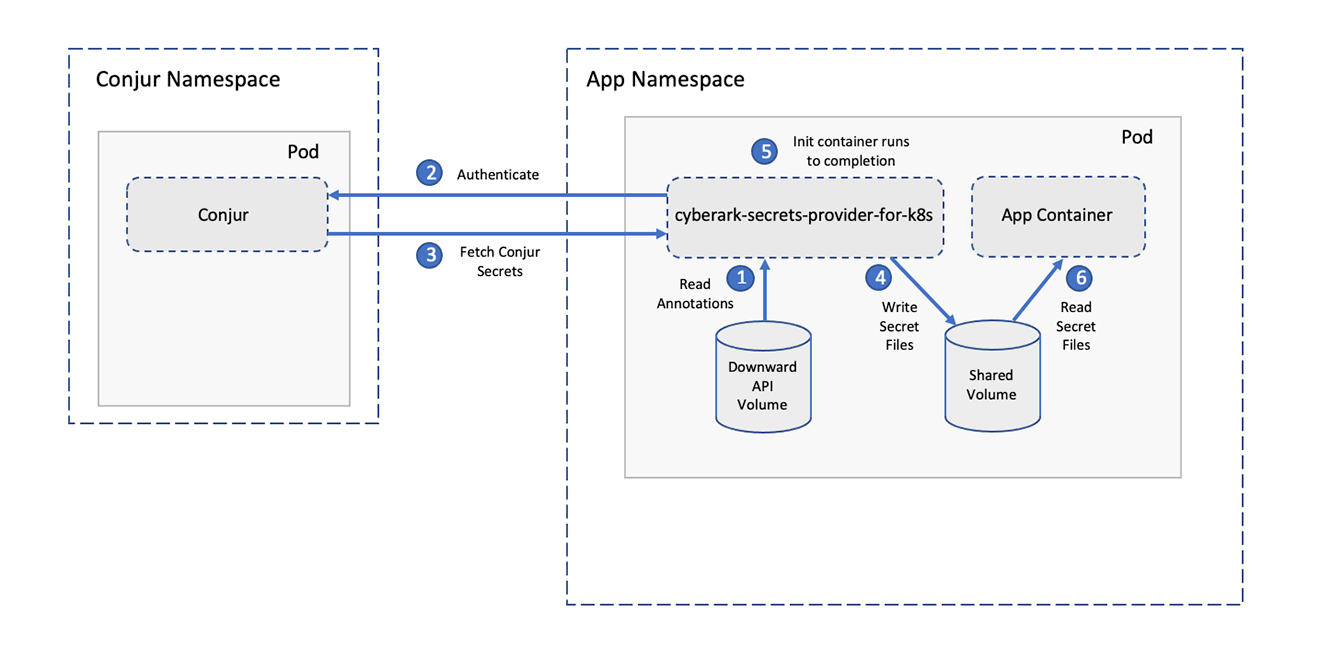

CyberArk’s Conjur Secrets Manager supports pushing secrets into this type of ephemeral volume using Secrets Provider for Kubernetes, as seen in the image below.

When You Must Use Environment Variables

It’s worth mentioning that in some cases you will have no choice but to use environment variables. For example, there are legacy applications that can only consume configuration values through environment variables. In these cases, there are steps that can be taken to improve your security posture while still using environment variables.

The first consideration is where the values for environment variables are stored. For example, you should not hard-code them in a Dockerfile or a Kubernetes YAML file: this makes them hard to manage, secure, audit and rotate, and exposes them to anyone who has the source code, including developers who shouldn’t have access to production secrets. A secrets manager like CyberArk Conjur offers a secure vault for storing secrets in a central location, where security teams can manage, rotate and audit secrets as well as restrict access using role-based access control (RBAC).

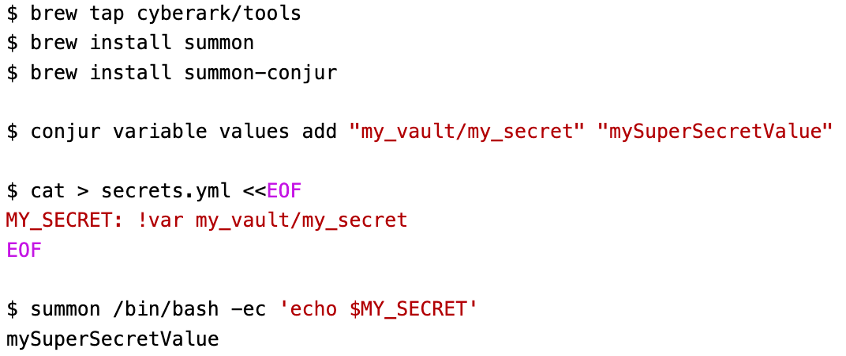

The second consideration is how you provide the values to the running application. You will want to limit the availability of the environment variables to the specific process that needs them rather than providing them globally, which would expose them to any process running in the same space as your application. CyberArk’s Summon allows you to pull secret values from a secret store such as Conjur (among others) and inject them into the environment of a specific process only, so other processes do not inherit them. Here’s an example that shows running a script that prints out a secret value that is fetched dynamically from Conjur:
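Independently of Summon, the underlying pattern of handing a secret to one child process instead of placing it in the global environment can be sketched in plain Python. This is not Summon’s implementation: `fetch_secret` is a hypothetical stand-in for a secrets-manager lookup, and the `DB_PASSWORD` variable and `prod/db/password` identifier are made up for illustration.

```python
import os
import subprocess
import sys


def fetch_secret(secret_id: str) -> str:
    """Hypothetical stand-in for a runtime lookup in a secrets manager.

    A real implementation would authenticate and fetch the value; this one
    returns a placeholder so the sketch runs on its own.
    """
    return f"value-of-{secret_id}"


def run_with_secret(cmd: list, env_name: str, secret_id: str) -> subprocess.CompletedProcess:
    """Run cmd with one secret added to *its* environment only.

    The parent's os.environ is never modified, so other processes the parent
    starts later do not inherit the secret.
    """
    child_env = dict(os.environ)
    child_env[env_name] = fetch_secret(secret_id)
    return subprocess.run(cmd, env=child_env, capture_output=True, text=True, check=True)


if __name__ == "__main__":
    result = run_with_secret(
        [sys.executable, "-c", "import os; print(os.environ['DB_PASSWORD'])"],
        env_name="DB_PASSWORD",
        secret_id="prod/db/password",
    )
    print(result.stdout.strip())
    print("DB_PASSWORD" in os.environ)
```

Because the parent never writes the secret into `os.environ`, processes it starts later do not inherit it. On Linux the child’s environment can still be read by other processes running as the same user, so this narrows exposure rather than eliminating it.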

Conclusion

While you may not always be able to avoid using environment variables to provide secrets to applications, there are steps you can take to use more secure methods when possible or to better protect the environment variables when it is not. With a centralized solution like Conjur Secrets Manager, you can better secure your secrets across your environments and help enable application security across your organization.

References

- CNCF, “Cloud Native Security Whitepaper v2,” October 2022.

- OWASP, “Secrets Management Cheat Sheet.”

- OWASP, “Kubernetes Cheat Sheet.”

- NillsF, “Don’t use environment variables in Kubernetes to consume secrets,” 24 February 2020.

- Diogo Mónica, “Why you shouldn’t use ENV variables for secret data,” 27 March 2017.

Shlomo is a staff software engineer at CyberArk working on Conjur Secrets Manager. He’s an open source and AppSec enthusiast, a member of the CNCF TAG Security and a contributor to multiple OWASP projects. In his free time, you can find him spending time with his wife and daughter, 3D printing, woodworking or hiking.