AI agents are no longer passive tools but are becoming trusted digital coworkers. They can fetch documents, schedule meetings, query APIs, and even make decisions on your behalf. But as they take on real responsibility, who are they acting as, and what happens when something goes wrong?

Unlike traditional software, these agents operate with a mix of autonomy and delegation. That makes identity and access control not just a security concern but a business-critical question. If your AI agent accesses private data or interacts with other systems, how do you ensure it’s doing so with the proper authority and nothing more?

In this post, we’ll unpack how defining, delegating, and enforcing AI agents’ identities can protect your organization, enable safe collaboration, and unlock new capabilities without compromising control.

The Risk of Delegation in AI Agents

Delegation is powerful but dangerous if unchecked. We risk losing visibility and control when we grant AI agents the ability to act on our behalf. An agent with excessive or vague authority can accidentally leak data, trigger unintended actions, or become a vector for lateral movement in systems.

To delegate safely, we must bound what agents can do, on whose behalf, and under what conditions, just as we do with human users or service accounts. This means designing forms of delegation that are granular, auditable, and revocable, and that align with identity and intent.

A standard solution is the delegation token: a scoped credential that lets an agent act on behalf of a user or another agent. This approach, formalized in RFC 8693 (OAuth 2.0 Token Exchange), enables layered identity and structured token exchange.

But issuing a token is just the beginning. The real challenge lies in the authorization decision: Who decides what the agent can do? In what context? And how is that decision enforced and audited? Without strong, intentional authorization controls, delegation becomes blind trust, opening the door to misuse, escalation, or unintended actions.

Delegation via Token Exchange (RFC 8693)

Before allowing one system or agent to act on behalf of another, you should consider how to represent both identities securely. Token exchange makes this possible by creating a new token that captures the relationship between the original subject and the acting party.

- Subject Token: Represents the original identity (e.g., the user or client).

- Actor Token: Represents the acting party (typically a service, AI Agent, or application acting on behalf of the subject).

The resulting delegation token includes both identities, allowing the agent (actor) to act on behalf of the user (subject).

Imagine this:

- Alice is a user.

- An AI agent, let’s call it Agent A, wants to contact Service B on Alice’s behalf (for example, to book a flight for her).

Steps:

- Alice authenticates, and her identity is turned into a subject token.

- AI Agent A, acting on Alice’s behalf, has its own actor token.

- Agent A requests a delegation token from the authorization server by submitting both tokens.

Token Exchange Request

POST /token HTTP/1.1
Host: auth.example.com
Content-Type: application/x-www-form-urlencoded

grant_type=urn:ietf:params:oauth:grant-type:token-exchange
&subject_token=eyJhbGciOiJSUzI1NiIs... (Alice's token)
&subject_token_type=urn:ietf:params:oauth:token-type:access_token
&actor_token=eyJhbGciOiJSUzI1NiIs... (AI Agent A's token)
&actor_token_type=urn:ietf:params:oauth:token-type:access_token
&requested_token_type=urn:ietf:params:oauth:token-type:access_token
&audience=https://service-b.example.com/
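As a rough illustration, the form body of the request above could be assembled as follows. This is a minimal sketch using only the Python standard library; the function name and the placeholder token strings are assumptions made for the example, and nothing is sent over the network.

```python
from urllib.parse import parse_qs, urlencode

TOKEN_EXCHANGE_GRANT = "urn:ietf:params:oauth:grant-type:token-exchange"
ACCESS_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:access_token"


def build_token_exchange_body(subject_token: str, actor_token: str, audience: str) -> str:
    """Return the form-encoded body of a token exchange request."""
    params = {
        "grant_type": TOKEN_EXCHANGE_GRANT,
        "subject_token": subject_token,            # Alice's token
        "subject_token_type": ACCESS_TOKEN_TYPE,
        "actor_token": actor_token,                # AI Agent A's token
        "actor_token_type": ACCESS_TOKEN_TYPE,
        "requested_token_type": ACCESS_TOKEN_TYPE,
        "audience": audience,                      # the service the new token is for
    }
    return urlencode(params)


# Placeholder values; real tokens would come from the earlier authentication steps.
body = build_token_exchange_body(
    subject_token="alice-token-placeholder",
    actor_token="agent-a-token-placeholder",
    audience="https://service-b.example.com/",
)

# Round-trip the body to confirm each parameter survived encoding.
decoded = parse_qs(body)
```

Building the body from a dictionary keeps the URN values and the audience URL correctly percent-encoded, which is easy to get wrong when concatenating strings by hand.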

Token Exchange Response

{
  "access_token": "eyJhbGciOiJSUzI1NiIsInR5cCI6IkpXVCJ9...",
  "issued_token_type": "urn:ietf:params:oauth:token-type:access_token",
  "token_type": "Bearer",
  "expires_in": 3600
}
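A client would typically sanity-check a response like this before using the token. The sketch below is one possible way to do that in Python; the function name is hypothetical, and the checks are limited to the members RFC 8693 marks as required plus a basic test on `expires_in`.

```python
import json

# Response members that RFC 8693 marks as required.
REQUIRED_FIELDS = ("access_token", "issued_token_type", "token_type")


def parse_token_exchange_response(raw: str) -> dict:
    """Parse a token exchange response and reject obviously malformed ones."""
    data = json.loads(raw)
    missing = [name for name in REQUIRED_FIELDS if name not in data]
    if missing:
        raise ValueError("response is missing: " + ", ".join(missing))
    expires_in = data.get("expires_in")
    if expires_in is not None and (not isinstance(expires_in, int) or expires_in <= 0):
        raise ValueError("expires_in must be a positive integer")
    return data


# Same shape as the response above; the token value is a truncated placeholder.
raw_response = """
{
  "access_token": "eyJhbGciOiJSUzI1NiIsInR5cCI6IkpXVCJ9...",
  "issued_token_type": "urn:ietf:params:oauth:token-type:access_token",
  "token_type": "Bearer",
  "expires_in": 3600
}
"""
response = parse_token_exchange_response(raw_response)
```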

The returned token is a delegation token that embeds both the subject and actor identities.

For example, its decoded payload might contain:

{
  "sub": "alice",
  "act": {
    "sub": "ai-agent-a"
  }
}

This allows Service B to recognize that the request is being made by AI Agent A on behalf of Alice.
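To show how Service B might read these claims, here is a minimal Python sketch that extracts the subject and the actor chain from a token payload. It deliberately skips signature verification, which a real service must perform first; the helper names and the unsigned demo token are assumptions made for illustration.

```python
import base64
import json


def b64url_encode(data: bytes) -> str:
    """Base64url-encode without padding, as JWT segments do."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def decode_claims_unverified(jwt: str) -> dict:
    """Decode the payload segment of a JWT WITHOUT verifying its signature."""
    payload_segment = jwt.split(".")[1]
    padding = "=" * (-len(payload_segment) % 4)  # restore stripped padding
    return json.loads(base64.urlsafe_b64decode(payload_segment + padding))


def delegation_chain(claims: dict) -> list[str]:
    """Return [subject, current actor, prior actors...] from nested act claims."""
    chain = [claims["sub"]]
    act = claims.get("act")
    while isinstance(act, dict):
        chain.append(act["sub"])
        act = act.get("act")
    return chain


# Build an unsigned demo token carrying the claims shown above.
header = b64url_encode(json.dumps({"alg": "none"}).encode())
payload = b64url_encode(json.dumps({"sub": "alice", "act": {"sub": "ai-agent-a"}}).encode())
demo_token = f"{header}.{payload}."

claims = decode_claims_unverified(demo_token)
chain = delegation_chain(claims)
```

Here `chain[0]` is the subject and `chain[1]` is the current actor. RFC 8693 treats any further nested `act` entries as informational only, so access decisions should rest on the top-level claims and the current actor.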

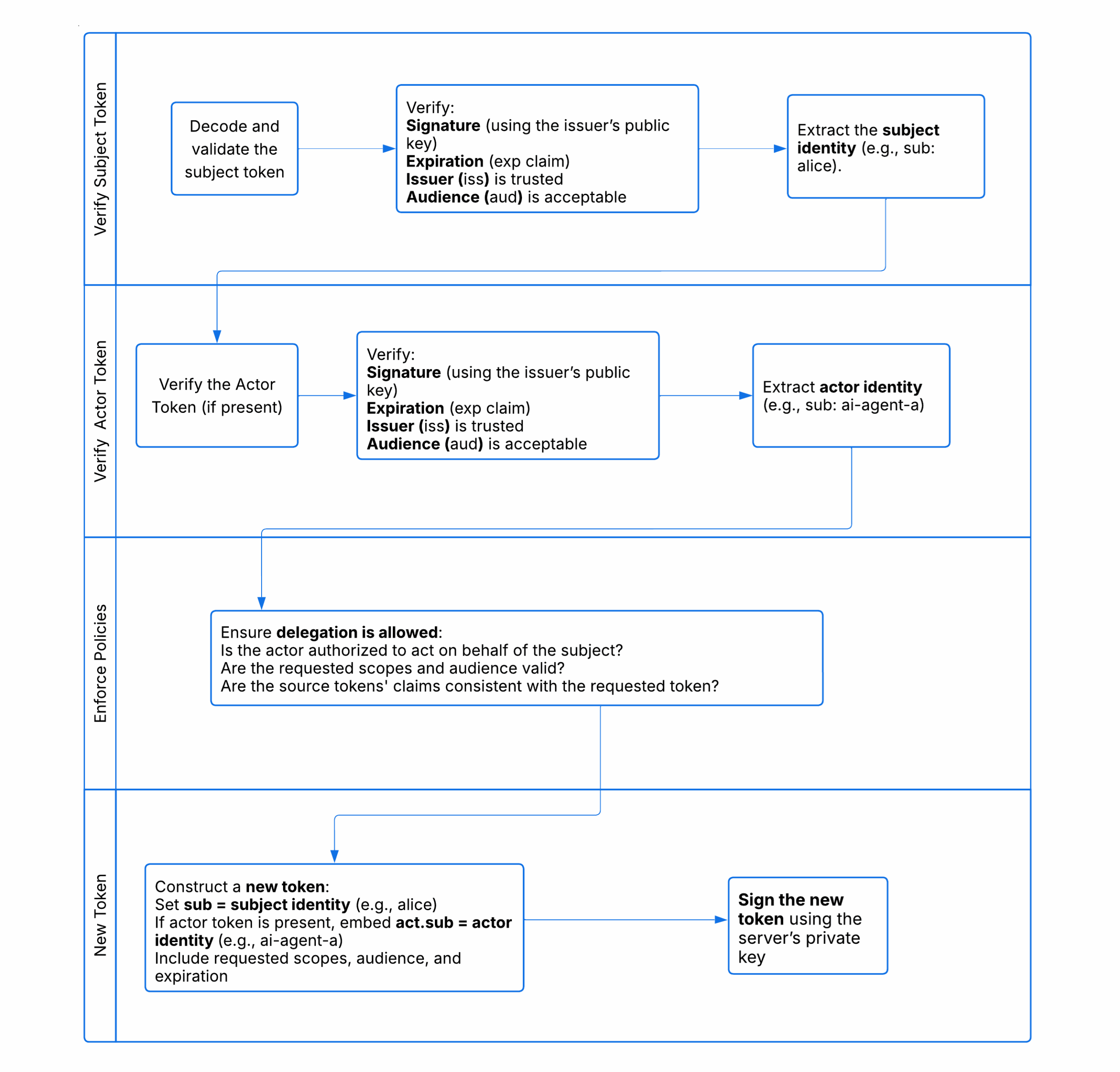

What Happens During a Token Exchange

The authorization server performs several checks and steps to issue a new token safely.

Constraints of Delegation Tokens and Permission Boundaries

Although OAuth 2.0 token exchange supports robust delegation use cases, the delegation token it produces does not automatically inherit or enforce the authorization limits of the subject token or the actor token.

In other words, when one party acts on behalf of another through token exchange, the resulting delegation token is not automatically limited to the original permissions of either the user (subject) or the acting agent (actor). Unless additional restrictions are implemented, it might allow different or unintended access.

Can we make informed, complex access decisions using OAuth2 federation?

When using OAuth2 federation, systems typically make simple, fixed decisions based on specific token details like a user ID or audience value. For example, AWS IAM’s OIDC federation grants access by matching static token claims to predefined roles without any advanced logic or analysis of the token’s content. This approach is intentional to keep the process straightforward and secure.

So, while OAuth2 federation enables identity delegation, it does not support making detailed or dynamic access decisions based on complete token information. AWS is a good example of simple, rigid federation mapping, focusing on exact matches rather than complex, context-based authorization.
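The exact-match style described here can be pictured with a short Python sketch. It is not AWS's implementation, only an illustration of static claim-to-role mapping; the mapping table, role name, and claim values are all hypothetical.

```python
# Hypothetical mapping table: every listed claim must match exactly.
ROLE_MAPPINGS = [
    {
        "match": {"aud": "https://service-b.example.com/", "sub": "alice"},
        "role": "flight-booking-role",
    },
]


def resolve_role(claims: dict, mappings: list = ROLE_MAPPINGS):
    """Return the first role whose match conditions all equal the token claims."""
    for mapping in mappings:
        if all(claims.get(key) == value for key, value in mapping["match"].items()):
            return mapping["role"]
    return None  # no mapping matched: access is denied


with_agent_a = resolve_role(
    {"aud": "https://service-b.example.com/", "sub": "alice", "act": {"sub": "ai-agent-a"}}
)
with_agent_x = resolve_role(
    {"aud": "https://service-b.example.com/", "sub": "alice", "act": {"sub": "unknown-agent"}}
)
```

Both calls resolve to the same role, because the static mapping never looks at the `act` claim. That is the limitation in a nutshell: the actor's identity and capabilities play no part in the decision.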

What privileges should a delegation token have?

A simple approach would be to give the delegation token only the permissions that the user (subject) and the AI agent or system (actor) have in common.
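As a baseline, that intersection could be computed over space-delimited OAuth scope strings, as in this small Python sketch. The scope names are invented for the example.

```python
def intersect_scopes(subject_scopes: str, actor_scopes: str) -> str:
    """Return the scopes present in both space-delimited strings, sorted."""
    common = set(subject_scopes.split()) & set(actor_scopes.split())
    return " ".join(sorted(common))


# Hypothetical scope sets for Alice and AI Agent A.
alice_scopes = "flights:read flights:book profile:read"
agent_scopes = "flights:book flights:read calendar:write"

delegated_scopes = intersect_scopes(alice_scopes, agent_scopes)
# delegated_scopes == "flights:book flights:read"
```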

But this isn’t always the correct answer.

For example, the Australian government requires users to prove their age to access some social media sites, which can accidentally block AI agents from using those platforms. Instead of blocking AI completely, platforms could allow AI agents to act on behalf of verified users under strict rules. This means the AI represents a real user, can do tasks like checking friends or security settings, and must follow platform policies.

In this case, the delegation token shouldn’t just reflect the overlap of user and AI permissions; it needs to reflect controlled, trusted access for the AI acting for the user.

A delegation token may therefore exceed the scope or resource access originally granted to the subject or the actor individually.

If a delegation token is not carefully constrained, an actor (e.g., AI Agent A) could use its role to escalate privileges by requesting access that the subject (e.g., Alice) would not usually have, or vice versa. Jailbreaking an AI agent amplifies the threat posed by unconstrained delegation tokens.

Also, nothing automatically ensures that the delegation respects least privilege or the original intent; an agent holding such a token can potentially perform unintended or unpredictable operations or move laterally inside the target system.
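One way to make this risk visible is to compare what a delegation token grants with what each party held on its own. The Python sketch below is a hypothetical audit helper, with made-up scope names, not a feature of any particular authorization server.

```python
def excess_scopes(granted: set[str], subject: set[str], actor: set[str]) -> dict[str, set[str]]:
    """Report granted scopes that go beyond the subject's or the actor's own."""
    return {
        "beyond_subject": granted - subject,
        "beyond_actor": granted - actor,
    }


# Hypothetical example: the issued token carries more than either party held.
report = excess_scopes(
    granted={"flights:book", "flights:read", "payments:refund"},
    subject={"flights:book", "flights:read"},
    actor={"flights:book"},
)
# report["beyond_subject"] == {"payments:refund"}
# report["beyond_actor"] == {"flights:read", "payments:refund"}
```

A non-empty `beyond_subject` or `beyond_actor` set does not always mean something is wrong, but it is a signal that the grant deserves an explicit policy decision rather than a default.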

Policy Engine to Assist

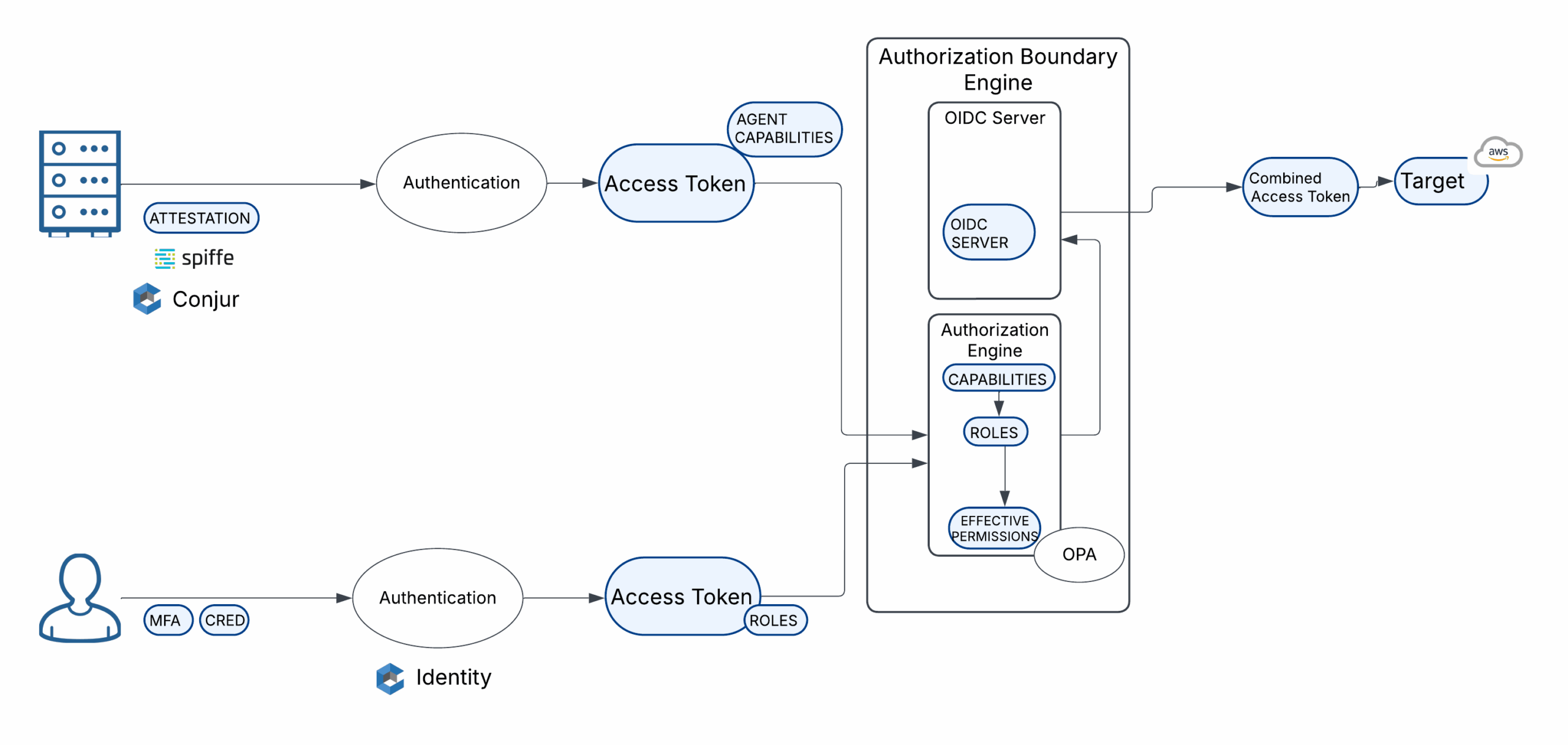

Open Policy Agent (OPA) is an open-source tool that helps organizations enforce fine-grained, flexible access control policies. Instead of hardcoding rules, OPA lets you write policies in a declarative language (Rego) and evaluate them anywhere in your app, API, or infrastructure. It makes dynamic, context-aware authorization decisions that go beyond basic static checks.

We integrate OPA to enforce fine-grained, dynamic authorization decisions on delegation tokens, which represent the combined identity of a human user and an AI agent. When a delegation token includes the human subject (sub) and the acting AI agent (act), OPA can evaluate this context against policies that can be customized in real time.

Policies can specify what actions an AI agent can perform on behalf of specific users according to agent capabilities (e.g., booking a flight), what scopes are permitted, and which resource access is valid under delegation. Using OPA, the system gains a flexible and auditable way to ensure that AI-driven actions stay within the intended boundaries of human consent and platform governance.

Example: OPA Authorization Apply Request

{
  "input": {
    "actor": {
      "claims": {
        "Capabilities": "book_flight"
      }
    },
    "subject": {
      "claims": {
        "Department": "Operations",
        "Role": "Director"
      }
    }
  }
}
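In an OPA deployment, the decision logic would live in a Rego policy evaluated against this input. Purely as a stand-in, the Python sketch below mimics the kind of default-deny rule such a policy might express. The policy table, the treatment of `Capabilities` as a space-delimited string, and the function name are all assumptions made for illustration.

```python
# Hypothetical policy data: which subject roles may delegate each capability.
ROLES_ALLOWED_TO_DELEGATE = {
    "book_flight": {"Director", "Manager"},
}


def allow(request: dict, action: str) -> bool:
    """Default-deny decision over an OPA-style input document."""
    actor = request["input"]["actor"]["claims"]
    subject = request["input"]["subject"]["claims"]
    # Assumption: Capabilities is a space-delimited string of action names.
    capabilities = set(actor.get("Capabilities", "").split())
    allowed_roles = ROLES_ALLOWED_TO_DELEGATE.get(action, set())
    # Both conditions must hold: the agent has the capability,
    # and the subject's role is permitted to delegate it.
    return action in capabilities and subject.get("Role") in allowed_roles


request = {
    "input": {
        "actor": {"claims": {"Capabilities": "book_flight"}},
        "subject": {"claims": {"Department": "Operations", "Role": "Director"}},
    }
}

can_book = allow(request, "book_flight")      # True
can_cancel = allow(request, "cancel_flight")  # False: the agent lacks this capability
```

The key point is that the decision depends on both identities at once: changing either the agent's capabilities or the subject's role changes the outcome.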

Just-In-Time (JIT) Access means giving access to something only when it’s needed, and only for as long as necessary: no earlier, no longer.

In security (and often in OAuth or cloud systems), JIT access helps:

- Reduce risk by minimizing how long someone (or something) holds sensitive permissions.

- Limit exposure if credentials are compromised.

- Enforce least privilege dynamically, not permanently.

Just Enough Access (JEA) means giving the minimum permissions necessary for someone (or something) to do their job: no more, no less.

It’s about shrinking the blast radius if something goes wrong:

- If a person or AI agent only has “read” permission, they can’t accidentally (or maliciously) delete things.

- If an AI agent only has access to a folder, it can’t snoop around the whole system.

The policy engine’s response is dynamic, and the decision about which role to assign in the target system is made just in time. In this case, we choose a least-privilege role that only allows access to the needed resources. We then create a short-lived token that gives the AI agent Just-In-Time (JIT) access, so it can perform only the required action, bounded by what its token permits and which resources it can reach (Just Enough Access, JEA).
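The combination of JIT and JEA can be sketched in a few lines of Python. This is an assumption-laden illustration, not a real token service: the role catalog, the `JitGrant` type, and the five-minute default lifetime are all hypothetical, and timestamps are passed in explicitly to keep the example deterministic.

```python
from dataclasses import dataclass

# Hypothetical catalog mapping each action to its least-privilege role (JEA).
ROLE_FOR_ACTION = {
    "book_flight": "flight-booking-writer",
    "view_itinerary": "itinerary-reader",
}


@dataclass(frozen=True)
class JitGrant:
    subject: str
    actor: str
    role: str
    issued_at: int   # seconds since the epoch
    expires_at: int  # seconds since the epoch

    def is_valid(self, now: int) -> bool:
        """A grant is usable only inside its short validity window (JIT)."""
        return self.issued_at <= now < self.expires_at


def issue_jit_grant(subject: str, actor: str, action: str, now: int, ttl_seconds: int = 300) -> JitGrant:
    """Pick the narrowest role for the action and bound it in time."""
    role = ROLE_FOR_ACTION.get(action)
    if role is None:
        raise PermissionError(f"no role defined for action {action!r}")
    return JitGrant(subject, actor, role, issued_at=now, expires_at=now + ttl_seconds)


grant = issue_jit_grant("alice", "ai-agent-a", "book_flight", now=1_700_000_000)
```

With these values, the grant carries only the `flight-booking-writer` role and stops being valid 300 seconds after issuance, so a leaked grant is useful to an attacker only briefly and only for one narrow purpose.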

Wrap-up:

Using this approach, we set clear and strict limits on what an AI agent can do. Instead of relying on simple fixed rules, we dynamically control the agent’s actions and access to resources based on the user’s original permissions combined with the agent’s roles, capabilities, and any other policies or context we define. This ensures safe, precise, and accountable delegation.